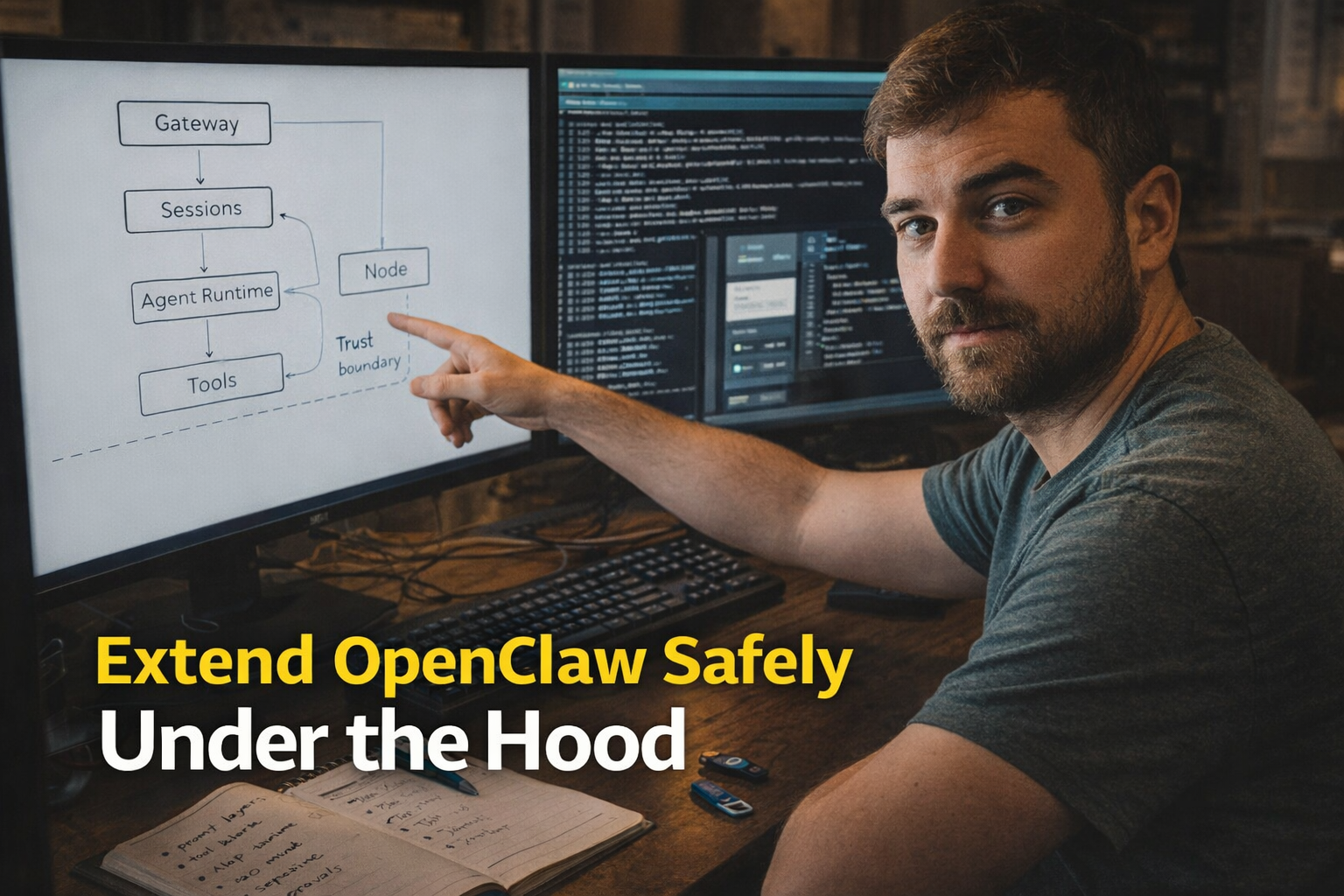

Extend OpenClaw Safely Under the Hood

Created by Shaunak Ghosh

Build an under-the-hood mental model of OpenClaw’s control plane, prompt/tool execution loop, and extension surfaces so you can add capabilities without collapsing trust boundaries. You’ll learn when to use SKILL.md vs plugins/hooks vs MCP-style bridges, and how to enforce sandboxing, tool policy, approvals, and observability in real deployments.

Requirements

- Comfort with event-driven systems (HTTP/WebSockets, adapters, routing)

- Working knowledge of LLM tool/function calling (schemas, tool results, iterative loops)

- Security fundamentals: least privilege, network exposure, supply-chain risk

- Ability to read/edit Markdown/YAML-like config and interpret logs

What you'll learn

- Trace message flow from channel adapter to gateway session routing, through the LLM tool-call loop to side-effecting execution.

- Predict when a tool is callable from what is injected into the system prompt, which skills are loaded, and which structured tool schemas are exposed, and budget context costs accordingly.

- Author SKILL.md-style instructions that are concise, tool-oriented, and verifiable, and choose when to enforce behavior via plugins/hooks instead.

Learning path

7 modules • Each builds on the previous one

OpenClaw Gateway and Node Architecture

Build a precise mental model of the Gateway as the long-lived control plane that owns channel sessions, and nodes as capability-advertising devices that execute privileged actions under pairing and policy boundaries.

OpenClaw System Prompt Internals

Understand how OpenClaw assembles a compact system prompt (tools, safety, workspace, skills list) and why tool availability is determined by both prompt text and structured tool schemas sent to the model.

OpenClaw Tool Orchestration Patterns

Learn the reliable “status → snapshot/describe → act → verify” patterns for browser, canvas, cron, and node-targeted tools, with emphasis on minimizing irreversible actions and improving determinism.
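
As a rough illustration of the pattern above, here is a minimal sketch that checks status, takes a snapshot, acts only when needed, and then verifies the result. `FakeBrowser`, `fill_field`, and the returned strings are hypothetical names invented for this example; they are not OpenClaw's tool API.

```python
from dataclasses import dataclass, field


@dataclass
class FakeBrowser:
    """Hypothetical in-memory stand-in for a browser-style tool."""
    running: bool = True
    fields: dict = field(default_factory=lambda: {"email": ""})

    def status(self) -> bool:
        return self.running

    def snapshot(self) -> dict:
        # Return a copy so callers cannot mutate tool state by accident.
        return dict(self.fields)

    def act(self, name: str, value: str) -> None:
        self.fields[name] = value


def fill_field(tool: FakeBrowser, name: str, value: str) -> str:
    """status -> snapshot -> act -> verify, skipping the action when possible."""
    if not tool.status():              # status: is the tool reachable at all?
        return "unavailable"
    before = tool.snapshot()           # snapshot: observe before touching anything
    if name not in before:
        return "target-missing"
    if before[name] == value:          # already in the desired state, so do nothing
        return "already-done"
    tool.act(name, value)              # act: the single side-effecting step
    after = tool.snapshot()            # verify: re-observe instead of trusting the call
    return "ok" if after.get(name) == value else "verify-failed"
```

Calling `fill_field` a second time with the same arguments returns `"already-done"` without acting, which is one way to keep a retry from repeating a side effect.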

OpenClaw SKILL.md Authoring Internals

Master how SKILL.md metadata and instructions shape agent behavior, including how to write concise, tool-oriented procedures that avoid ambiguity and reduce prompt-injection surface area.

OpenClaw Plugin Extension Architecture

Understand plugins as in-process Gateway extensions that can register tools, RPC, commands, and bundled skills, and learn the decision framework for when a capability belongs in a skill, plugin, or external tool bridge.

OpenClaw Sandboxing and Exec Approvals

Learn how sandboxing, tool allow/deny policy, and exec approvals combine into hard enforcement layers that bound blast radius even when the model is confused, tricked, or maliciously prompted.
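
To make the layering concrete, the sketch below evaluates a tool call against a deny list, an allow list, a sandbox requirement, and an approval requirement, in that order. The `Policy` fields, the `decide` function, and the tool names are assumptions made up for illustration, not OpenClaw's actual configuration schema or enforcement code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical policy shape: sets of tool names per enforcement layer."""
    allow: frozenset
    deny: frozenset = frozenset()
    sandboxed_only: frozenset = frozenset()
    needs_approval: frozenset = frozenset()


def decide(policy: Policy, tool: str, *, sandboxed: bool, approved: bool) -> str:
    """Each layer can only narrow what is permitted; none depends on the model."""
    if tool in policy.deny:                      # deny always wins over allow
        return "deny: denied by policy"
    if tool not in policy.allow:                 # default-deny for unknown tools
        return "deny: not allowlisted"
    if tool in policy.sandboxed_only and not sandboxed:
        return "deny: sandbox required"
    if tool in policy.needs_approval and not approved:
        return "pending: approval required"
    return "allow"


policy = Policy(
    allow=frozenset({"read_file", "exec"}),
    deny=frozenset({"delete_all"}),
    sandboxed_only=frozenset({"exec"}),
    needs_approval=frozenset({"exec"}),
)
```

In this sketch, `exec` is allowed only when the call is both sandboxed and approved, so a confused or manipulated model that requests it still ends at a denial or a pending approval.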

OpenClaw Debugging and Operational Hardening

Develop a production-grade debugging loop using diagnostics (doctor), logging levels, and targeted status probes to isolate failures in gateway runtime, channels, skills eligibility, nodes, and sandbox policy.
