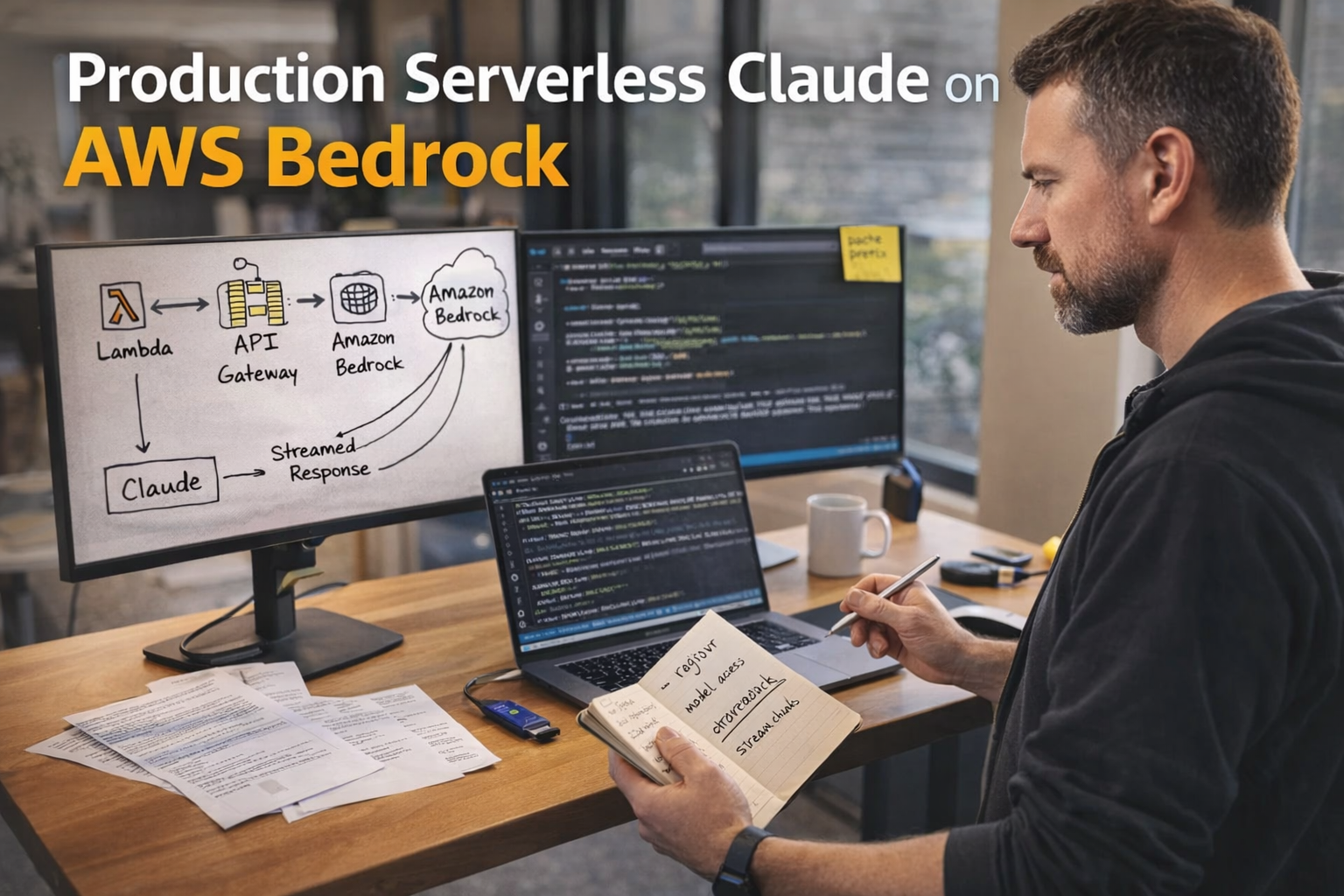

Production Serverless Claude on AWS Bedrock

Created by Shaunak Ghosh

Deploy Claude on Amazon Bedrock in a serverless architecture: resolve model-access and region blockers, invoke Claude reliably (including streaming), and integrate Lambda-based tool patterns safely. You’ll finish with a concrete cost and latency optimization lever, prompt caching, so you can reason about unit economics before scaling traffic.

Requirements

- AWS IAM roles, policies, and least-privilege design

- AWS Lambda and API Gateway request/response patterns

- Using AWS SDKs (boto3/SDK equivalents) in production services

- Basic observability practices (logs, traces, correlation IDs)

What you'll learn

- Preempt and diagnose Bedrock-Claude enablement failures by validating region alignment and per-region model access before deployment.

- Implement a Claude invocation path using AWS SDK patterns, including correct request serialization, response parsing, and streaming event consumption.

- Design a serverless integration interface for model-driven tool execution using Lambda action groups with explicit control boundaries and IAM as the contract.

Learning path

4 modules • Each builds on the previous one

Bedrock Claude access and IAM

Enable the right Anthropic Claude model(s) in Amazon Bedrock and design least-privilege IAM so serverless runtimes can invoke them reliably across environments. You’ll also learn the practical failure modes behind access-denied, region mismatch, and quota-related errors so you can diagnose them quickly.
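As a rough illustration of the least-privilege idea in this module, the sketch below builds an IAM policy document that allows invocation of an explicit list of foundation models in a single region. The helper name `build_invoke_policy`, the `Sid`, and the example model ID are assumptions chosen for illustration, not course material. Models invoked through an inference profile may need additional resource ARNs that this sketch does not cover.

```python
import json


def build_invoke_policy(region: str, model_ids: list[str], allow_streaming: bool = True) -> dict:
    """Build an IAM policy document scoped to specific Bedrock foundation models.

    Hypothetical helper: the function name and Sid are illustrative only.
    """
    # Converse/InvokeModel are authorized by bedrock:InvokeModel; the streaming
    # variants are authorized by bedrock:InvokeModelWithResponseStream.
    actions = ["bedrock:InvokeModel"]
    if allow_streaming:
        actions.append("bedrock:InvokeModelWithResponseStream")

    # Foundation-model ARNs carry a region but no account ID.
    resources = [
        f"arn:aws:bedrock:{region}::foundation-model/{model_id}"
        for model_id in model_ids
    ]

    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "InvokeApprovedClaudeModels",
                "Effect": "Allow",
                "Action": actions,
                "Resource": resources,
            }
        ],
    }


# Example model ID; substitute whichever Claude model your account has enabled.
policy = build_invoke_policy("us-east-1", ["anthropic.claude-3-haiku-20240307-v1:0"])
print(json.dumps(policy, indent=2))
```

Pinning the region inside the resource ARN means a runtime deployed to a region without model access fails with an explicit authorization error, which is usually easier to diagnose than a wildcard policy that hides the mismatch.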

Invoke Claude with Converse APIs

Use the current Amazon Bedrock runtime interface for Claude by understanding how Converse and ConverseStream structure messages, outputs, stop reasons, and token usage. You’ll learn how to choose between consistent Converse APIs and legacy model-specific invocation paths based on portability and feature needs.
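To make the Converse message structure concrete, here is a minimal sketch that builds the keyword arguments for a Converse call and extracts text, stop reason, and token usage from a response. The helper names `build_converse_request` and `summarize_converse_response` are hypothetical, and the sample response is hand-written to mirror the documented response shape rather than captured from a real call.

```python
def build_converse_request(model_id: str, user_text: str,
                           max_tokens: int = 512, temperature: float = 0.2) -> dict:
    """Return keyword arguments suitable for a bedrock-runtime Converse call."""
    return {
        "modelId": model_id,
        # Each message has a role and a list of content blocks.
        "messages": [{"role": "user", "content": [{"text": user_text}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": temperature},
    }


def summarize_converse_response(response: dict) -> dict:
    """Pull out the fields most services log: text, stop reason, token usage."""
    blocks = response["output"]["message"]["content"]
    # Content can mix block types (for example tool use); keep only text blocks.
    text = "".join(block["text"] for block in blocks if "text" in block)
    usage = response.get("usage", {})
    return {
        "text": text,
        "stop_reason": response.get("stopReason"),
        "input_tokens": usage.get("inputTokens", 0),
        "output_tokens": usage.get("outputTokens", 0),
    }


# Hand-written sample in the Converse response shape (not real model output).
sample_response = {
    "output": {"message": {"role": "assistant", "content": [{"text": "Hello!"}]}},
    "stopReason": "end_turn",
    "usage": {"inputTokens": 12, "outputTokens": 3, "totalTokens": 15},
}

request = build_converse_request("anthropic.claude-3-haiku-20240307-v1:0", "Say hello.")
summary = summarize_converse_response(sample_response)
print(summary)
```

With boto3 installed and credentials configured, the request would be sent as `boto3.client("bedrock-runtime", region_name="us-east-1").converse(**request)`, and the returned dictionary passed to `summarize_converse_response`.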

Serverless integration patterns on AWS

Map Bedrock-Claude invocations into serverless architectures using Lambda and API Gateway, including synchronous chat endpoints and asynchronous/batch patterns for long-running workloads. You’ll focus on production best practices: throttling, retries, idempotency, observability, and safe multi-tenant design.
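Two of the practices named here, retries and idempotency, can be sketched without any AWS dependency. The example below assumes a hypothetical `ThrottledError` standing in for a throttling failure (with boto3 this would typically surface as a `ClientError` whose error code is `ThrottlingException`) and derives a per-tenant idempotency key from a canonical form of the request body. All names are illustrative.

```python
import hashlib
import json
import random
import time


class ThrottledError(Exception):
    """Hypothetical stand-in for a throttling error from the model endpoint."""


def idempotency_key(tenant_id: str, payload: dict) -> str:
    """Derive a stable key so a retried request can be deduplicated per tenant."""
    # Sorting keys makes the key independent of dictionary ordering.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(f"{tenant_id}:{canonical}".encode("utf-8")).hexdigest()


def call_with_backoff(fn, max_attempts: int = 4, base_delay: float = 0.2,
                      max_delay: float = 5.0, sleep=time.sleep):
    """Call fn(), retrying throttling errors with capped exponential backoff and full jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except ThrottledError:
            if attempt == max_attempts:
                raise  # Out of attempts: surface the error to the caller.
            cap = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Full jitter: wait a random time between 0 and the current cap.
            sleep(random.uniform(0, cap))


# Demo with a fake call that is throttled twice before succeeding.
attempts = {"count": 0}


def fake_invoke():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise ThrottledError("rate exceeded")
    return "ok"


result = call_with_backoff(fake_invoke, sleep=lambda seconds: None)
print(result, attempts["count"])
```

Keeping the tenant ID inside the idempotency key is one way to stop identical prompts from different tenants colliding in a shared deduplication store. Lambda's time limit also bounds the total retry budget, so `max_attempts` and `max_delay` should be chosen with the function timeout in mind.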

Streaming responses and cost optimization

Implement end-to-end streaming for Claude responses and understand where buffering or limits can occur across Bedrock streaming APIs, Lambda response streaming, and API Gateway response streaming. Then apply Bedrock cost levers (prompt caching, batch inference, and provisioned throughput) by mapping each to workload shapes and measurable token and latency outcomes.
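For the streaming half of this module, the sketch below shows one way to consume ConverseStream events: forward each text delta as it arrives, then record the stop reason and token usage from the trailing events. The `collect_stream` helper and its `on_text` callback are hypothetical, and the sample events are hand-written in the ConverseStream event shape rather than captured from a real call.

```python
def collect_stream(events, on_text=None) -> dict:
    """Consume ConverseStream-style events, forwarding text deltas as they arrive."""
    parts = []
    stop_reason = None
    usage = {}
    for event in events:
        if "contentBlockDelta" in event:
            delta = event["contentBlockDelta"]["delta"]
            # Deltas can also carry tool-use input; this sketch handles text only.
            if "text" in delta:
                parts.append(delta["text"])
                if on_text is not None:
                    on_text(delta["text"])  # e.g. write the chunk to the HTTP response
        elif "messageStop" in event:
            stop_reason = event["messageStop"].get("stopReason")
        elif "metadata" in event:
            # Token usage arrives in a metadata event near the end of the stream.
            usage = event["metadata"].get("usage", {})
    return {"text": "".join(parts), "stop_reason": stop_reason, "usage": usage}


# Hand-written sample events (not real model output).
sample_events = [
    {"messageStart": {"role": "assistant"}},
    {"contentBlockDelta": {"delta": {"text": "Hel"}, "contentBlockIndex": 0}},
    {"contentBlockDelta": {"delta": {"text": "lo"}, "contentBlockIndex": 0}},
    {"contentBlockStop": {"contentBlockIndex": 0}},
    {"messageStop": {"stopReason": "end_turn"}},
    {"metadata": {"usage": {"inputTokens": 10, "outputTokens": 2, "totalTokens": 12}}},
]

chunks = []
streamed = collect_stream(sample_events, on_text=chunks.append)
print(streamed)
```

With boto3, the events would come from `client.converse_stream(**request)["stream"]`. Because usage is reported only near the end of the stream, any per-request cost accounting (including comparing token counts before and after enabling prompt caching) has to wait until the stream is fully consumed.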
